Some amazing, as well as some downright freaky, innovations in artificial intelligence and machine learning were detailed at the recent Decoded Future NYC Summit, presented by Stylus. Take, for example, technology that will watch consumers' faces as they shop online, recording smiles, frowns, or other gestures to measure interest in a product. In the near future, consumers could also be interacting “face-to-face” with virtual assistants: machines that have lifelike personalities, emotional responses and character.

While those advances may be a few years away, AI and machine learning have already become customary among brands and retailers who are looking to track the right trends in style, color, and pricing. The major players have been taking advantage of this technology for years. But now, thanks to advancements in the field, mom and pop stores can also use it to compete.

“AI and cloud create a whole range of possibilities,” says Kishore Rajgopal, founder and CEO of NextOrbit, a predictive algorithm firm for retailers and consumer brands. “One of our missions is to offer enterprise-grade functionality and features at mid-market prices. Small mom and pop stores do not require extensive infrastructure. AI can provide a data-driven foundation in the form of a shared platform for demand forecasting and order that can save a lot of their manual work.”

Rajgopal says NextOrbit can provide location-based pricing, better customer understanding, visibility into micro-level changes in trends, and suggested actions. These, he says, are vital roles that AI can play for retailers as they realize changes in the store environment.

“Through AI, even the smallest detail can be analyzed to always keep the store on a positive front,” Rajgopal says. “It helps a retailer to be nimble in this era of digitalization.”

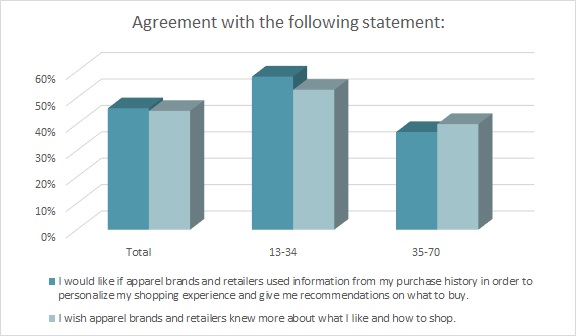

Nearly half of all consumers (45 percent) say they wish apparel brands “knew more about what I like and how I shop,” according to the Cotton Incorporated Lifestyle Monitor™ Survey. Additionally, 46 percent say they would like apparel brands to use information from their purchase history in order to personalize their shopping experience and give them recommendations on what to buy.

Overall, most consumers (66 percent) think retailers should focus more on improving customer service both in-store and online, according to Monitor™ research, and having better knowledge about consumer likes and dislikes feeds into that.

The Collage Group, a consumer research and strategic advisory company, is working on another way to better connect with consumers. The firm is using machine learning and facial tracking to help brands understand how to better represent and reach audiences of color in TV ads and online video content. The technology reveals critical differences in how different demographic groups respond emotionally to ads, which tells brand marketers where and why their ads have appeal, or not.

“At Collage Group, we have been using facial expression analysis to more deeply understand how consumers from different cultural backgrounds (race, ethnicity, generation and gender) process ads differently,” says David Evans, chief product officer. “For example, consider the Levi’s ‘Circles’ ad from 2017: a montage of circle dances, featuring a wide variety of religious, ethnic, subcultural and sexual identities, all wearing Levi’s jeans. We found significant differences between how multicultural and white consumers responded. [There was] generally a strong positive response from multiculturals, but a split response among whites into two distinctly positive or negative groups.

“We also combined that analysis with machine learning, which allows us to take an understanding of norms to a whole new level. Machine learning allows us to evaluate not only whether an ad does better than the average ad in the category on a variety of features, but also how important each of the features is to outcomes such as brand favorability or purchase intent,” Evans explains. “For the Circles ad, we discovered that indeed the drivers of brand favorability are in fact quite different for different groups, with African Americans responding more to people and characters, and Hispanics responding more to visual and images.”

Such technology can help brands hone their messages and perhaps inspire loyalty among consumers. That’s becoming more of an issue as consumers have more places to shop and receive messaging from more platforms than ever. Nearly half of all consumers (47 percent) admit to being less loyal to clothing brands than they were a few years ago, according to Monitor™ data. The figure increases significantly to 55 percent among Millennials aged 25-to-34.

Fashion brands and retailers may find it heartening to learn that consumers feel certain types of technology would inspire them to actually buy clothes from a store. Almost half (46 percent) would like to see a virtual reality experience in-store, while 54 percent would like to have a personalized shopping experience with collections pulled together based on previous purchases and preferences, according to Monitor™ research. Another 57 percent would like to see augmented reality visualization, which allows customers to see how an outfit will look without having to go to a store and try an item on.

At the Decoded Future summit, Stylus’ Saisangeeth Daswani, head of advisory for fashion, beauty, and APAC (Asia Pacific), described just such a face-swapping tool called Super Personal. It pairs the user’s face to a very similar body type so consumers can virtually see how an outfit will look, providing a realistic dressing room experience without all the hassle.

“To really change the status quo, businesses will start to become more cooperative,” Daswani said. “I think adaptable brands that are really attuned to some of these new mindsets are better prepared to operate in new ways that ultimately will be the ones that will start to thrive from now all the way to the future into 2035.”